For the first time, Massachusetts Institute of Technology (MIT) neuroscientists have identified a population of neurons in the human brain that lights up when we hear singing, but not other types of music. These neurons, found in the auditory cortex, appear to respond to the specific combination of voice and music, but not to either regular speech or instrumental music. Exactly what they are doing is unknown and will require more work to uncover, the researchers say.

“The work provides evidence for relatively fine-grained segregation of function within the auditory cortex, in a way that aligns with an intuitive distinction within music,” says Sam Norman-Haignere, PhD, who led the study. A former MIT postdoc, he is now an assistant professor of neuroscience at the University of Rochester Medical Center.

The work builds on a 2015 study in which the same research team used functional MRI (fMRI) to identify a population of neurons in the brain’s auditory cortex that responds specifically to music. In the new work, the researchers used recordings of electrical activity taken at the surface of the brain, which gave them much more precise information than fMRI.

“There’s one population of neurons that responds to singing, and then very nearby is another population of neurons that responds broadly to lots of music. At the scale of fMRI, they’re so close that you can’t disentangle them, but with intracranial recordings, we get additional resolution, and that’s what we believe allowed us to pick them apart,” says Norman-Haignere.

Neural Recordings

In their 2015 study, the researchers used fMRI to scan the brains of participants as they listened to a collection of 165 sounds, including different types of speech and music, as well as everyday sounds such as finger tapping or a dog barking. For that study, the researchers devised a novel method of analyzing the fMRI data, which allowed them to identify six neural populations with different response patterns, including the music-selective population and another population that responds selectively to speech.

In the new study, the researchers hoped to obtain higher-resolution data using a technique known as electrocorticography (ECoG), which records electrical activity through electrodes placed inside the skull. This offers a much more precise picture of electrical activity in the brain than fMRI, which measures blood flow in the brain as a proxy for neural activity.

“With most of the methods in human cognitive neuroscience, you can’t see the neural representations,” says MIT neuroscientist Nancy Kanwisher. “Most of the kind of data we can collect can tell us that here’s a piece of brain that does something, but that’s pretty limited. We want to know what’s represented in there.”

Electrocorticography cannot typically be performed in humans because it is an invasive procedure, but it is often used to monitor patients with epilepsy who are about to undergo surgery to treat their seizures. Patients are monitored over several days so that doctors can determine where their seizures originate before operating.

During that time, if patients agree, they can participate in studies that involve measuring their brain activity while performing certain tasks. For this study, the MIT team was able to gather data from 15 participants over several years.

For those participants, the researchers played the same set of 165 sounds that they used in the earlier fMRI study. The location of each patient’s electrodes was determined by their surgeons, so some electrodes did not pick up any responses to auditory input, but many did. Using a novel statistical analysis that they developed, the researchers were able to infer the types of neural populations that produced the data recorded by each electrode.

“When we applied this method to this data set, this neural response pattern popped out that only responded to singing,” Norman-Haignere says. “This was a finding we really didn’t expect, so it very much justifies the whole point of the approach, which is to reveal potentially novel things you might not think to look for.”

That song-specific population of neurons responded only very weakly to speech or instrumental music, and is therefore distinct from the music- and speech-selective populations identified in the 2015 study.

Music in the Brain

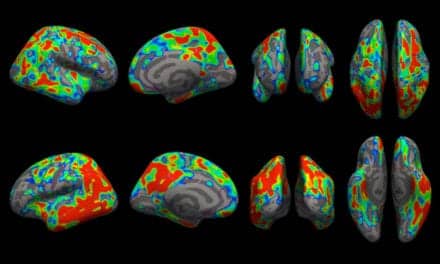

In the second part of their study, the researchers devised a mathematical method to combine the data from the intracranial recordings with the fMRI data from their 2015 study. Because fMRI can cover a much larger portion of the brain, this allowed them to determine more precisely the locations of the neural populations that respond to singing.

“This way of combining ECoG and fMRI is a significant methodological advance,” says MIT neuroscientist Josh McDermott. “A lot of people have been doing ECoG over the past 10 or 15 years, but it’s always been limited by this issue of the sparsity of the recordings. Sam is really the first person who figured out how to combine the improved resolution of the electrode recordings with fMRI data to get better localization of the overall responses.”

The song-specific hotspot that they found is located at the top of the temporal lobe, near regions that are selective for language and music. That location suggests that the song-specific population may be responding to features such as perceived pitch, or the interaction between words and perceived pitch, before sending information to other parts of the brain for further processing, the researchers say.

The researchers now hope to learn more about what aspects of singing drive the responses of these neurons. They are also working with MIT professor Rebecca Saxe’s lab to study whether infants have music-selective areas, in hopes of learning more about when and how these brain regions develop.